My AI Practice Went From 6 Iterations to Push-Button in 21 Days

A governed workspace turned a favor into a four-tier service.

A friend asked me to review a grant proposal. Small arts nonprofit, first application to a major foundation, tight deadline. I said yes as a favor — no engagement, no pricing, no templates. Just twenty years of grant experience and an AI workspace that already had evaluation scaffolding from prior projects.

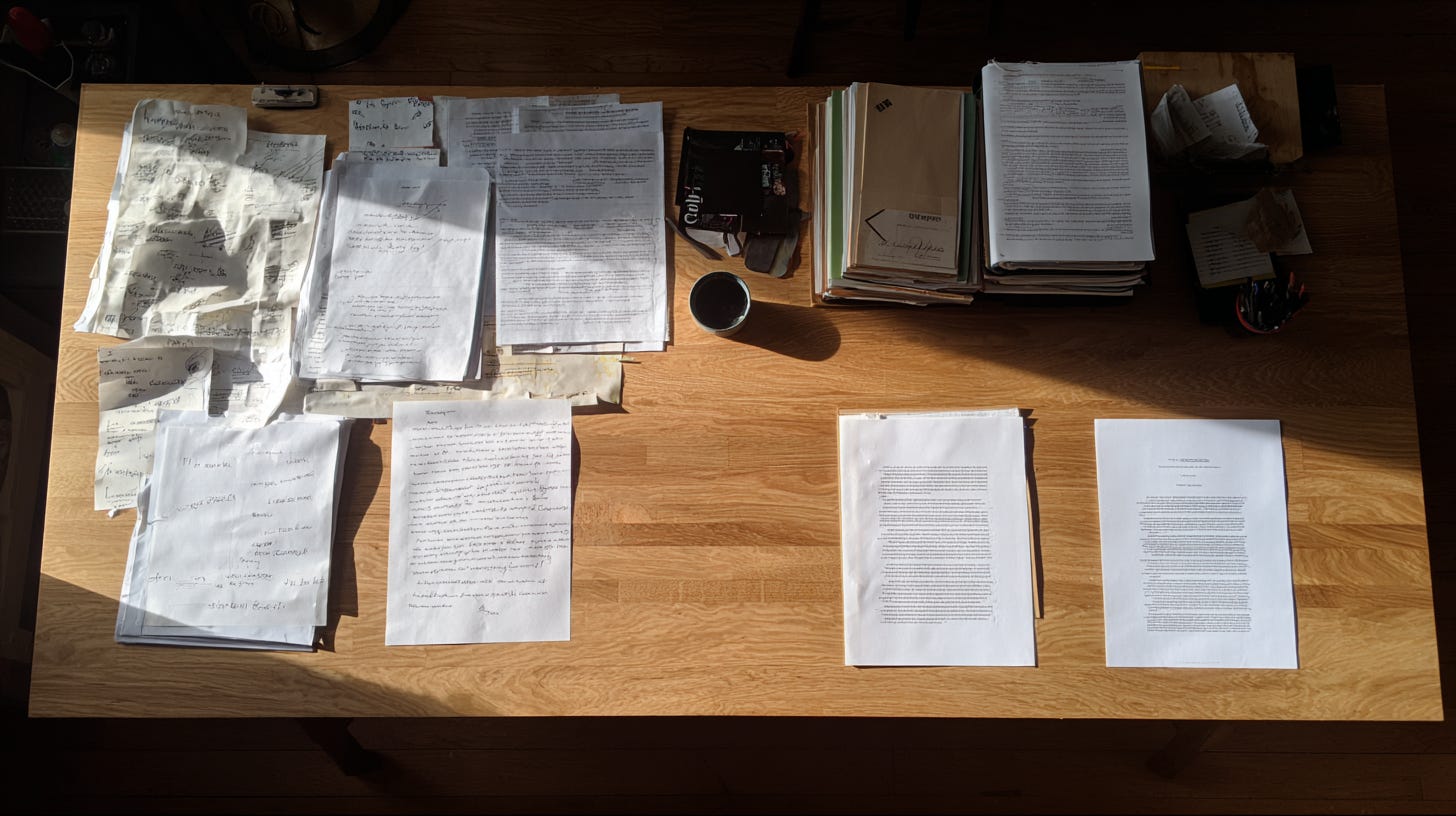

The first package took 30 minutes of my time. Three iterations on the evaluation — a SWOT analysis, criteria scoring, and a pre-submission checklist. Three more on the recommended rewrite. Six total iterations, each one bespoke. The deliverable scored the proposal at 7 out of 10 with specific, fixable gaps identified.

Thirty minutes for a multi-section evaluation package. At $750, that’s $1,500 per hour, well above the grant consulting market rate of $100–250 per hour. The price wasn’t the open question. The time was: would 30 minutes hold across a second engagement?
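The arithmetic behind that rate is simple enough to sketch. The function name and the market-rate variables below are illustrative, not part of any tooling described here; the only inputs taken from the text are the $750 price, the 30-minute delivery, and the $100–250 hourly market range.

```python
def effective_hourly_rate(price_usd: float, minutes: float) -> float:
    """Return the price divided by the hours of practitioner time spent."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return price_usd * 60 / minutes


# Figures from the text: a $750 package delivered in 30 minutes.
rate = effective_hourly_rate(750, 30)
print(rate)  # 1500.0

# Multiple of the quoted market range ($100-250 per hour).
market_low, market_high = 100, 250
print(rate / market_high, rate / market_low)  # 6.0 15.0
```

The rate is only as stable as the denominator: if delivery drifts to 60 minutes, the same $750 package drops to $750 per hour.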

The Friction

The first evaluation was artisanal. Every section header crafted in real time. Every scoring rationale written for that specific proposal. The SWOT analysis structured around that nonprofit’s particular circumstances. It worked because I have two decades of pattern recognition in grant funding — I know what review panels look for, where proposals typically fail, and which weaknesses are fixable in a revision cycle. But all of that knowledge lived in my head, expressed fresh each time. Nothing from the first delivery made the second one faster.

I was genuinely fast. But the practice didn’t compound.

The Build

What happened over the next 21 days wasn’t a product launch. It was a series of engagements that each deposited something into the infrastructure.

Day 1 — the favor

The arts nonprofit evaluation produced the first working package: a SWOT, criteria scoring, and a rewrite. Six iterations. Thirty minutes. No templates. Everything built in the workspace, nothing reusable yet.

Week 1 — pricing and first constraint lock

The 30-minute delivery time validated the price point. I launched two tiers: a standalone evaluation at $350 and a full package (evaluation plus rewrite plus ask list) at $750. Founding client rates, capped at ten engagements. The rate only held if the delivery time held.

Week 2 — the second engagement broke the template

An education nonprofit needed an evaluation. Different sector, different funder, different proposal structure. I expected the second engagement to validate the template. It broke it instead. The evaluation framework covered ten sections. The education proposal exposed two gaps: no adversarial lens (what would a hostile reviewer flag?) and no editorial check (the small errors that signal sloppiness to a review panel). The standard expanded from ten sections to twelve — a fixed schema with scoring logic for each section. The template expanded under pressure.

The constraint file locked the twelve-section standard after the second engagement. Everything else moved. This didn’t.

Week 3 — template lock and tier expansion

After the second engagement, I locked the templates: branded deliverables, standardized section headers, build scripts that enforced the twelve-section standard. A constraints document formalized what the service would and wouldn’t do — including a rule that no new section could be created during delivery. If the schema didn’t cover it, it waited for the next infrastructure pass.
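A minimal sketch of what a build-script check for that rule might look like, assuming a deliverable is represented as a mapping from section name to text. Everything here is hypothetical: the actual scripts aren't shown, and the section identifiers are placeholders except for the adversarial lens and editorial check, which are the two additions named earlier.

```python
# Hypothetical locked schema: ten original sections (placeholder names)
# plus the two added after the second engagement.
LOCKED_SECTIONS = tuple(f"section_{i:02d}" for i in range(1, 11)) + (
    "adversarial_lens",
    "editorial_check",
)


class SchemaViolation(Exception):
    """Raised when a deliverable does not match the locked schema."""


def validate_deliverable(sections: dict[str, str]) -> None:
    """Reject deliverables that add or omit sections relative to the schema."""
    extra = [name for name in sections if name not in LOCKED_SECTIONS]
    missing = [name for name in LOCKED_SECTIONS if name not in sections]
    if extra:
        # The "no new section during delivery" rule.
        raise SchemaViolation(f"sections outside the locked schema: {extra}")
    if missing:
        raise SchemaViolation(f"required sections missing: {missing}")


# Usage: a draft that covers exactly the twelve sections passes silently.
draft = {name: "..." for name in LOCKED_SECTIONS}
validate_deliverable(draft)

# Adding a section mid-delivery fails the build instead of shipping.
try:
    validate_deliverable({**draft, "budget_narrative": "..."})
except SchemaViolation as err:
    print(err)
```

The design choice is that the check fails loudly rather than quietly accepting the extra section, which is what pushes new ideas to the next infrastructure pass instead of into the current delivery.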

Then two new tiers emerged from conversations, not planning. A prospective client needed to know whether their proposal was even competitive before investing in a rewrite — that became a fit assessment at $450. Another client didn’t have a proposal yet — they needed to know which funders to target and why. That became a strategic funder pipeline at $750, delivering 25 screened funders narrowed to 9 with strategy context.

Both new tiers delivered in ~30 minutes. Not because I designed them that way, but because the infrastructure had compressed the decision-making to the point where delivery was execution, not invention.

**Final state:** Four tiers, $450 to $1,750, all 30-minute deliveries. Effective rates between $900 and $3,500 per hour. Delivery wasn’t the constraint. Demand was.

The Insight

Delivery Compression is what happens when decisions stop being made during delivery.

Each engagement deposits reusable artifacts — templates, build scripts, evaluation standards, constraints — into the practice infrastructure. Each artifact eliminates a category of decisions that used to be made fresh every time. Delivery time drops until it asymptotes at the irreducible core: the expertise itself.

Compression is not automation. Automation replaces the human. I’m still evaluating every proposal, still applying twenty years of pattern recognition, still making judgment calls about what a review panel will flag. What I’m not doing is deciding how to structure the deliverable, what sections to include, or what the intake requirements should be. Those decisions were made once, tested twice, and locked.

It’s not productization. Productization standardizes the output — same deliverable, same format, same scope. Compression removes the decisions required to produce the output. My four tiers look different, serve different purposes, and answer different questions. What they share is the same decision architecture.

And it’s not scaling. Scaling adds capacity. Compression reduces the cost per unit of expertise applied. At 30 minutes and one practitioner, I’m not scaled. I’m compressed.

The first two engagements are expensive. The third is where it pays off. The templates hold. The build scripts work. The constraints absorb the new case without expanding. If delivery time doesn’t drop after the third engagement, you’re not compressing. You’re just organizing.

The counterfactual is specific. Without the infrastructure deposits from the first two engagements, the fourth engagement — the funder pipeline — would have taken hours to scope, price, and deliver. Instead it took 30 minutes, because every structural decision had already been made. The pipeline tier didn’t require new architecture. It required applying existing architecture to a new surface.

The Honest Part

Twenty-one days is fast for a four-tier service. But the 21 days had 20 years behind them. The grant evaluation expertise — knowing what review panels look for, how foundation and government funders differ, which proposal weaknesses are fatal vs. fixable — that wasn’t built in three weeks. The AI compressed the delivery of that expertise. It didn’t generate the expertise itself.

The 30-minute delivery time benefits from a specific kind of domain. Grant proposals are structured documents with well-understood evaluation criteria — scoring rubrics, required sections, common failure modes. The templates work because the domain has shared standards. Whether this compression curve applies to domains with fuzzier deliverables — strategy consulting, creative direction, organizational design — is untested.

The pricing works at this effective rate because demand is low. The math changes when demand exceeds what one practitioner can absorb. The first thing that breaks isn’t delivery time. It’s quality consistency. The templates and build scripts transfer to a second evaluator. The judgment calls about which weaknesses are fatal versus cosmetic might not. Compression also stops when new engagements no longer modify the infrastructure, and the locked schema cuts the other way too: the first proposal that falls outside the twelve-section structure spikes delivery time back to artisanal levels. The schema is the ceiling.

What This Is Actually About

Prior case studies in this series deposited specific artifacts: a constraints template, a decision log pattern, an adversarial evaluation workflow, a multi-tool orchestration protocol. This one adds the Delivery Compression pattern — a practice architecture where each engagement makes the next one faster by depositing reusable artifacts into the infrastructure.

CS1 proved an AI workspace could build a data product in a single session. CS4 proved a structured adversarial loop could harden a high-stakes deliverable. CS5 proved that pre-existing artifacts could combine into an unplanned product. This case study shows what happens when that infrastructure faces paying clients: six iterations collapse to one, and the economics follow.

But compression has a blind spot. It measures whether delivery is getting faster. It doesn’t measure whether the infrastructure underneath is getting smarter — or just getting bigger. If you can’t tell the difference, your system is accumulating, not compounding.

Case Study Insight: Delivery Compression is what happens when decisions stop being made during delivery — each engagement deposits artifacts that eliminate re-decision cost, and delivery time drops to the irreducible core of the expertise itself.

Robert Ford builds products, writes stories and essays, and publishes The Intelligence Engine — a Substack about building AI practices that compound. His other writing lives at Brittle Views.