I Built an Automated Events App in Two Days. The Interesting Part Isn’t the App.

A real build log from a governed AI practice.

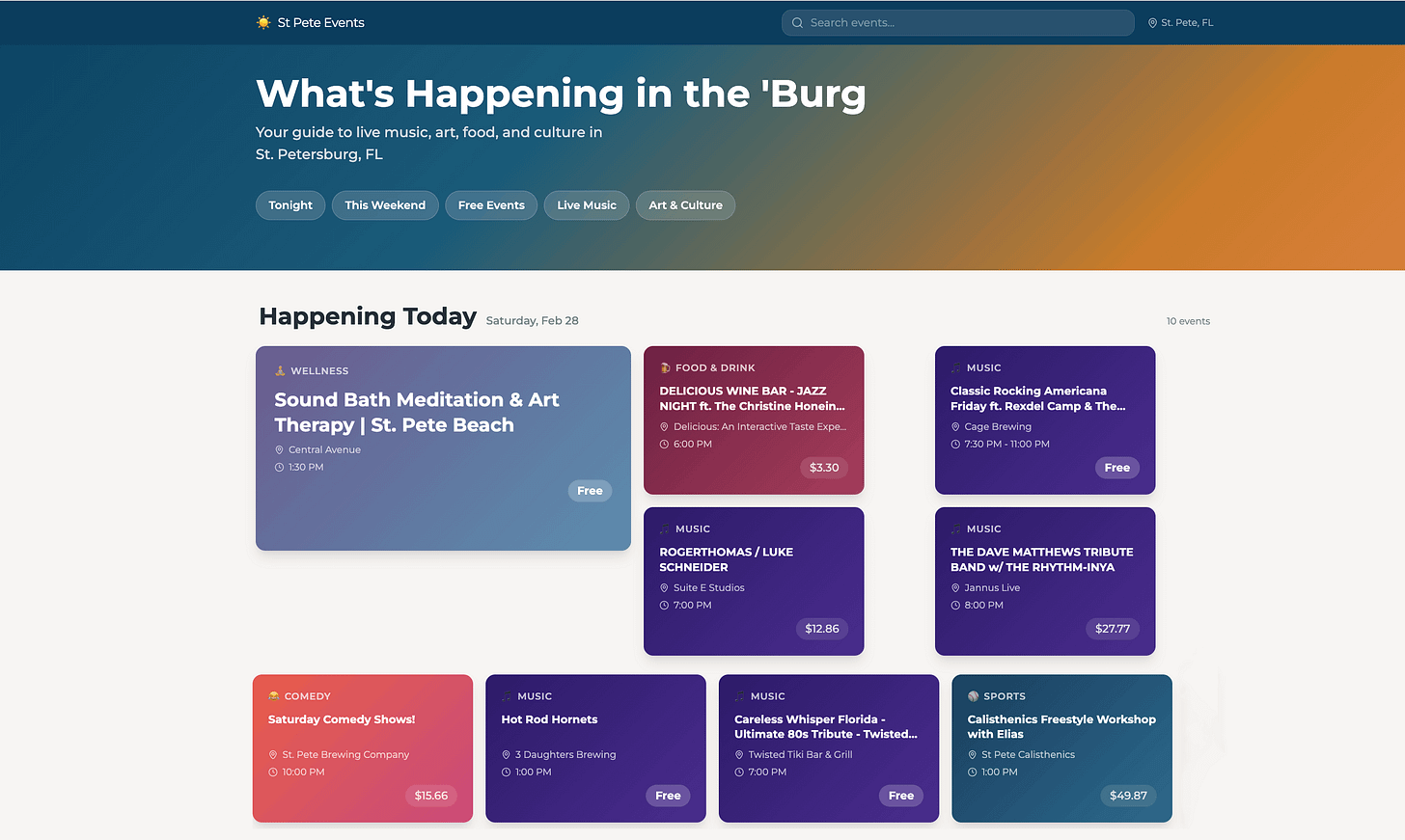

Two days ago, I decided to build a local events directory for St. Petersburg, Florida. By this morning it was live — 873 events across 22 venues, auto-refreshing every three hours, with category filtering, venue pages, and a visual identity that someone might actually use.

If this were a normal “I built X with AI” post, I’d walk you through the prompts. I’d tell you which model I used. I’d imply you could do the same thing this weekend.

I’m not going to do that. Because the prompts don’t matter. What matters is why session three could build on session two, why session five could audit work from session three, and why the whole thing didn’t collapse into the Typist Trap pattern: exciting first draft, slow decay, abandoned project.

The app is real. You can visit it. But the app is the proof, not the point.

What Actually Happened

Sessions 1–2 were manual and messy. Scraping venue websites through a browser, extracting event data by hand, injecting SQL one statement at a time. By the end I had 262 events across 13 venues — functional, but brittle. The kind of output that impresses for an afternoon and becomes a maintenance burden by Tuesday.

I also had the familiar feeling: I’d made dozens of small decisions — which venues had usable event pages, which date formats parsed correctly, which categories made sense — and none of them were recorded anywhere. If I closed the session, all of that judgment would evaporate. The next session would start from zero.

This is where most AI projects stall. By the third session, you’re paying the Amnesia Tax — spending more energy on context recovery than on building.

Session 3 was the inflection point. While reviewing the venue profiles logged in previous sessions, I discovered that Eventbrite embeds structured data in its page source: venue IDs that unlock an API endpoint returning every upcoming event for that venue. What had been hours of manual scraping per venue, linear and one site at a time, became one automated job calling that endpoint for every mapped venue. One Edge Function, 64 events upserted in seconds.
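The word "upserted" matters here. A fetch that reruns every few hours has to update events it has already seen rather than duplicate them. The post doesn't show the function, so what follows is only a minimal TypeScript sketch under two assumptions: each event carries a stable ID from the upstream platform, and an in-memory `Map` stands in for the events table. `EventRecord`, `upsertEvents`, and the sample events are all hypothetical.

```typescript
// Hypothetical shape of one event as returned by a venue's event feed.
interface EventRecord {
  sourceId: string; // stable ID assigned by the upstream platform (assumed)
  venueId: string;
  title: string;
  startsAt: string; // ISO 8601 timestamp
}

// Hypothetical store: an in-memory Map standing in for the events table,
// keyed by sourceId so one upstream event can never produce two rows.
type EventStore = Map<string, EventRecord>;

// Insert unseen events and overwrite known ones with fresh data.
// Returns insert/update counts, which are handy for job logs.
function upsertEvents(
  store: EventStore,
  incoming: EventRecord[],
): { inserted: number; updated: number } {
  let inserted = 0;
  let updated = 0;
  for (const event of incoming) {
    if (store.has(event.sourceId)) {
      updated++;
    } else {
      inserted++;
    }
    store.set(event.sourceId, event);
  }
  return { inserted, updated };
}

// Usage: running the same fetch twice leaves the row count unchanged.
const store: EventStore = new Map();
const batch: EventRecord[] = [
  { sourceId: "eb-1", venueId: "v-1", title: "Jazz Night", startsAt: "2025-03-07T20:00:00Z" },
  { sourceId: "eb-2", venueId: "v-1", title: "Open Mic", startsAt: "2025-03-08T19:00:00Z" },
];
const first = upsertEvents(store, batch);
const second = upsertEvents(store, batch);
console.log(first, second, store.size);
```

In a real database the same rule is usually expressed as an insert with a conflict clause on the source-ID column (for example, PostgreSQL's `INSERT ... ON CONFLICT ... DO UPDATE`). That keeps deduplication in the schema rather than in application code.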

That discovery only happened because session two’s venue research was logged — including the dead ends.

Session 4 was infrastructure. Date format bugs. A recurring events strategy. Data source classification for every venue. Not glamorous. Entirely necessary. The decision that matters most from this session: log every venue you investigate, even the dead ends. One line in a database — “SKIP: EventPrime plugin, no public API” — means no future session wastes an hour re-investigating a venue that was already ruled out.
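A dead-end log only pays off if the next session checks it before starting any research. Here is a minimal sketch of that check. It assumes each investigation is stored as one record with a status and a reason. The `VenueInvestigation` type, the status labels, and the venue names are hypothetical; only the EventPrime note comes from the text above.

```typescript
// How a venue's events can be collected (hypothetical labels).
// SKIP marks a venue that was investigated and ruled out.
type SourceStatus = "API" | "SCRAPE" | "SKIP";

interface VenueInvestigation {
  venue: string;
  status: SourceStatus;
  note: string; // the reason, kept so no one has to rediscover it
}

// Venues an automated job should actually fetch from.
function fetchable(log: VenueInvestigation[]): string[] {
  return log.filter((entry) => entry.status !== "SKIP").map((entry) => entry.venue);
}

// Candidate venues with no log entry at all. A SKIP entry counts as
// investigated, so ruled-out venues never come back as research tasks.
function uninvestigated(candidates: string[], log: VenueInvestigation[]): string[] {
  const seen = new Set(log.map((entry) => entry.venue));
  return candidates.filter((venue) => !seen.has(venue));
}

const log: VenueInvestigation[] = [
  { venue: "Venue A", status: "API", note: "Eventbrite venue ID found in page source" },
  { venue: "Venue B", status: "SKIP", note: "EventPrime plugin, no public API" },
];

const toFetch = fetchable(log);
const toResearch = uninvestigated(["Venue A", "Venue B", "Venue C"], log);
console.log(toFetch, toResearch);
```

The key design choice is that a SKIP entry counts as investigated. If the filter looked only at viable venues, every future session would be sent back to the ones already ruled out.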

That’s institutional memory. A session-by-session workflow throws away failed research. A governed system makes it permanent.

Session 5 was the compound session.

I audited categories across all 873 events and reclassified over 40 of them — using the classifier from session three as a starting point, not building a new one. I redesigned the frontend after studying how Do512, Time Out, and The Infatuation handle event discovery. I deployed four functional upgrades and set up three automated jobs: event fetching every three hours, scraper runs every six, cleanup of past events at 3 AM.
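The three jobs map naturally onto cron-style schedules. The post doesn't name the scheduler, so this sketch assumes standard five-field cron syntax, with each job firing at minute 0. The job names are hypothetical. `hourMatches` handles only the hour-field forms used here and is not a full cron parser.

```typescript
// The three jobs from the text as five-field cron expressions
// (minute, hour, day of month, month, day of week). Minute 0 is an assumption.
const jobs = [
  { name: "fetch-events", cron: "0 */3 * * *" }, // every three hours
  { name: "run-scrapers", cron: "0 */6 * * *" }, // every six hours
  { name: "cleanup-past-events", cron: "0 3 * * *" }, // daily at 3 AM
];

// Matches only the hour-field forms used above: "*", "*/n", or a single hour.
// This is not a general cron parser.
function hourMatches(field: string, hour: number): boolean {
  if (field === "*") return true;
  if (field.startsWith("*/")) return hour % Number(field.slice(2)) === 0;
  return Number(field) === hour;
}

// Names of the jobs that fire at the top of the given hour (0 to 23).
function jobsAtHour(hour: number): string[] {
  return jobs
    .filter((job) => hourMatches(job.cron.split(" ")[1] ?? "*", hour))
    .map((job) => job.name);
}

// Count how many times each job runs in one day.
const runsPerDay = new Map<string, number>();
for (let hour = 0; hour < 24; hour++) {
  for (const name of jobsAtHour(hour)) {
    runsPerDay.set(name, (runsPerDay.get(name) ?? 0) + 1);
  }
}
console.log(jobsAtHour(3), runsPerDay);
```

Under these assumptions, the cleanup and an event fetch both fire at 3 AM. The scrapers and the fetcher also coincide every sixth hour. Jobs that touch the same table should therefore tolerate running concurrently.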

The category audit referenced session three’s classifier. The venue pages used addresses backfilled in session two. The automation built on the Edge Functions from session three. A day that was only possible because nothing before it was lost.

Session 6 was the handoff. The project had its own data pipeline, its own automation schedule, and its own standing policies. It was also generating decisions faster than the parent workspace could track, and its log entries were crowding out other projects’ context. It graduated to its own workspace, with fourteen policies consolidated into a dedicated operating document. The system recognized its own growth.

Why This Didn’t Collapse

Every AI build has the same failure mode: Intelligence Leaks — context loss between sessions.

This build avoided that because it ran inside a governed workspace — a system where every project has three things most AI workflows lack:

Constraints that persist.

Rules like “use short month date format” or “log all investigated venues, even non-viable ones” are written once and enforced in every subsequent session. They don’t drift.

Decisions that accumulate.

Every choice gets logged with context: what was decided, what alternatives were considered, what consequences follow. Session five references session three’s reasoning without anyone needing to reconstruct it.

Sessions that build on each other.

Session three’s Edge Function depends on session two’s venue profiles. Session five’s category audit reuses session three’s classifier.
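The post doesn't describe the file format behind its constraints and decision logs. What follows is only an illustrative TypeScript sketch of the idea: standing rules and logged decisions, each with its alternatives and consequences, assembled into the context a new session starts from. The `Constraint` and `DecisionEntry` shapes and `sessionPreamble` are hypothetical. The two rules are quoted from the text, and the sample decisions are illustrative paraphrases of sessions three and four.

```typescript
// A standing rule: written once, applied in every later session.
interface Constraint {
  id: string;
  rule: string;
}

// One decision-log entry: what was decided, what was rejected, what follows.
interface DecisionEntry {
  session: number;
  decision: string;
  alternatives: string[];
  consequences: string[];
}

// Assemble the context a new session starts from: all standing constraints,
// then all prior decisions in session order, so nothing is re-explained by hand.
function sessionPreamble(constraints: Constraint[], decisions: DecisionEntry[]): string {
  const lines: string[] = ["Standing constraints:"];
  for (const c of constraints) lines.push(`- [${c.id}] ${c.rule}`);
  lines.push("Prior decisions:");
  const ordered = [...decisions].sort((a, b) => a.session - b.session);
  for (const d of ordered) {
    lines.push(`- S${d.session}: ${d.decision}`);
    if (d.alternatives.length > 0) lines.push(`  rejected: ${d.alternatives.join("; ")}`);
    if (d.consequences.length > 0) lines.push(`  follows: ${d.consequences.join("; ")}`);
  }
  return lines.join("\n");
}

const preamble = sessionPreamble(
  [
    { id: "C1", rule: "Use short month date format" },
    { id: "C2", rule: "Log all investigated venues, even non-viable ones" },
  ],
  [
    {
      session: 4,
      decision: "Log every investigated venue, including dead ends",
      alternatives: ["Log only viable venues"],
      consequences: ["No session re-investigates a ruled-out venue"],
    },
    {
      session: 3,
      decision: "Fetch Eventbrite venues through the venue-ID endpoint",
      alternatives: ["Keep scraping venue pages by hand"],
      consequences: ["One Edge Function covers every mapped venue"],
    },
  ],
);
console.log(preamble);
```

Decisions are sorted by session, so a later session reads the reasoning in the order it accumulated, whatever order the entries were stored in.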

The AI doesn’t get smarter between sessions. The system around it does.

The Honest Part

The workspace system that governed this build — the constraint files, the decision logs, the session protocols — took months to develop. Two days is real, but it’s misleading if you read it as “start from nothing.” Without that infrastructure, this is a three-week project with the usual mid-build crisis where you realize you’ve been re-explaining your own decisions to a machine that doesn’t remember making them.

The methodology is transferable. The speed is not — not immediately.

And the app isn’t finished. Mobile isn’t optimized. Search doesn’t exist yet. Some venue scrapers still need building. “Built in two days” means “reached production in two days,” not “completed.”

What This Is Actually About

The automated jobs are running right now. The venue database is growing. The constraints file has fourteen standing policies that will govern the next session, and the one after that, without anyone needing to re-explain them.

That’s the difference between a project and a party trick. A project compounds.

The question is whether anything you build with AI survives contact with next week.

I’m turning the full methodology — the workspace system, the governance model, the protocols that made this build possible — into a course called Stop Starting Over With AI. If this resonates, there’s more coming.

In the meantime: the next time you start an AI session, notice whether it builds on the last one.

If not, you already know what’s leaking.

Robert Ford builds products, writes stories and essays, and runs six concurrent AI-assisted projects using a governed workspace system. His other writing lives at Brittle Views.